Mend.io introduces two practical tools designed to help teams quickly assess AI maturity, measure AI-related risk, and collect the evidence leadership will expect in 2026:

- an interactive AI Security Maturity Survey (including a personalized score and mapped recommendations)

- a companion AI Security Compliance Checklist.

Both tools align with recognized industry standards. They are structured for immediate use in discovery sessions, audits, and planning activities.

Why this matters right now

Regulatory requirements and auditor expectations are evolving rapidly. Frameworks and standards such as OWASP AIMA, NIST AI RMF, ISO/IEC 42001, and the EU AI Act are increasingly used as baseline checklists for evaluating AI programs. Enforcement authorities under the EU AI Act become operational in August 2026, and mandatory incident-reporting obligations are approaching. Organizations must therefore not only deploy safe AI systems but also document and demonstrate compliance. The survey and checklist are built for this need: they help identify gaps, prioritize remediation, and produce audit-ready documentation.

1. Interactive AI security maturity survey

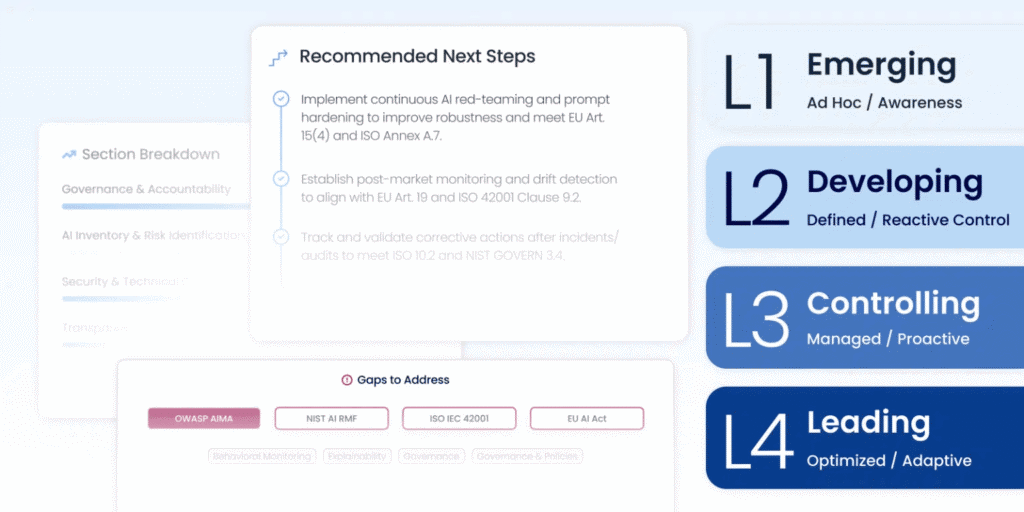

- A concise (~5-minute) self-assessment that measures AI security posture against leading frameworks (OWASP AIMA, NIST AI RMF, ISO/IEC 42001, EU AI Act).

- A defined maturity rating (Emerging → Developing → Controlling → Leading) along with a prioritized action roadmap.

- Clear recommendations mapped directly to applicable frameworks, suitable for review by leadership or auditors.

2. AI security compliance checklist

A practical, audit-ready toolkit presented in checklist format. Core sections include:

- Governance & accountability: identification of responsible owners, formal policies, risk registers, and human oversight mechanisms.

- AI inventory & risk identification: development and maintenance of an AI Bill of Materials (AI BoM), structured threat modeling, and pre-deployment risk evaluations.

- Security & technical controls: input and output validation to mitigate prompt injection, access management, encryption, continuous red teaming, and integration into the SDLC.

- Transparency & lifecycle assurance: model cards, decision logging, AIWE (AI Weakness) scoring, runtime monitoring, and bias and fairness assessments.

- Continuous improvement & compliance proof: incident response processes, audit support, corrective action tracking, and post-market monitoring.

The checklist can function as a structured action guide or as a working document during discovery sessions.
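As an illustration of the inventory section, an AI BoM entry can be sketched as a small record. This is a minimal sketch under stated assumptions: the `AIBomEntry` class, its field names, and the example systems are hypothetical and do not reflect Mend.io's format or any standard schema.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class AIBomEntry:
    """One AI system or component in a hypothetical AI BoM (illustrative fields only)."""
    name: str
    owner: str                     # accountable person or team
    component_type: str            # e.g. "model", "dataset", "agent"
    provider: str                  # internal team or external vendor
    use_case: str
    risk_evaluated: bool = False   # has a pre-deployment risk evaluation been done?
    notes: list[str] = field(default_factory=list)


def unassessed(entries: list[AIBomEntry]) -> list[str]:
    """Return the names of entries that lack a pre-deployment risk evaluation."""
    return [e.name for e in entries if not e.risk_evaluated]


# Hypothetical example inventory.
inventory = [
    AIBomEntry("support-chatbot", "platform-team", "model", "external vendor",
               "customer support", risk_evaluated=True),
    AIBomEntry("code-assistant", "dev-tools", "model", "external vendor",
               "code completion"),
]

# Serialize the inventory so it can be attached to an audit or discovery record.
print(json.dumps([asdict(e) for e in inventory], indent=2))
print(unassessed(inventory))
```

Keeping the owner and risk-evaluation status on each entry ties the inventory back to the governance and risk-identification sections of the checklist.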

Interpreting your assessment results and defining next steps

The survey assigns a position on a four-level maturity scale. Each stage reflects a distinct level of control and readiness. The immediate next step for each stage is outlined below:

- Emerging – No comprehensive inventory, limited governance, minimal behavioral testing.

First move: Establish visibility. Begin compiling an AI BoM to answer a foundational question: “What AI systems are currently in use?” Systems that have not been identified cannot be secured.

- Developing – Some policies and manual safeguards exist, but enforcement is inconsistent.

First move: Standardize protective controls. Implement system prompt detection and hardening. Enforce governance policies for LLMs to ensure that development speed does not introduce unmanaged risk.

- Controlling – Formalized controls are in place, automation is partial, and testing is at a foundational level.

First move: Introduce adversarial realism. Transition from periodic assessments to continuous validation. Implement automated red teaming and AIWE scoring to detect behavioral risks.

- Leading – Mature governance structures, continuous monitoring, and documented evidence prepared for auditors.

First move: Demonstrate and formalize assurance. Prioritize scalable reporting, structured audit artifacts, and lifecycle controls to substantiate safety at scale.
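The stage-to-action mapping above can be expressed as a simple lookup. The sketch below is illustrative only: the `Maturity` enum, the `FIRST_MOVE` table, and the `roadmap` helper are hypothetical names, and treating later stages' first moves as the remaining roadmap is an assumption, not the survey's actual scoring or reporting logic.

```python
from enum import IntEnum


class Maturity(IntEnum):
    """The four maturity levels, ordered from least to most mature."""
    EMERGING = 1
    DEVELOPING = 2
    CONTROLLING = 3
    LEADING = 4


# First move per stage, paraphrased from the list above.
FIRST_MOVE = {
    Maturity.EMERGING: "Establish visibility: start compiling an AI BoM",
    Maturity.DEVELOPING: "Standardize protective controls: harden system prompts, enforce LLM policies",
    Maturity.CONTROLLING: "Introduce adversarial realism: automated red teaming and AIWE scoring",
    Maturity.LEADING: "Demonstrate and formalize assurance: scalable reporting and audit artifacts",
}


def roadmap(stage: Maturity) -> list[str]:
    """Return the first move for `stage`, followed by those of later stages (assumed ordering)."""
    return [FIRST_MOVE[s] for s in Maturity if s >= stage]


for step in roadmap(Maturity.DEVELOPING):
    print(step)
```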

What the survey report includes

The report is structured for use in internal risk reviews or for presentation to a governance committee. Upon completion of the assessment, it provides:

- A defined maturity level with a concise explanation of its implications for the AI program.

- A prioritized remediation list mapped to OWASP AIMA, NIST AI RMF, ISO/IEC 42001, and the EU AI Act.

- Action-oriented guidance aligned to the assigned maturity stage (visibility → guardrails → continuous testing → assurance).

For security teams responsible for audits or procurement

When preparing for review by an independent third party or engaging in procurement discussions, the checklist can be used to generate structured audit documentation. Examples include an AI BoM, model cards, operational runbooks covering human oversight and incident response, red team assessment reports, and runtime monitoring logs. These artifacts correspond directly to documentation expected under the EU AI Act and related standards.
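One way to track these artifacts ahead of an audit is a simple gap check. This is a rough sketch, assuming the artifact list above is the target set; the identifiers in `REQUIRED_ARTIFACTS` and the `missing_artifacts` helper are hypothetical and not part of the checklist itself.

```python
# Artifact names taken from the examples above; the identifiers are illustrative.
REQUIRED_ARTIFACTS = {
    "ai_bom",
    "model_cards",
    "human_oversight_runbook",
    "incident_response_runbook",
    "red_team_report",
    "runtime_monitoring_logs",
}


def missing_artifacts(collected: set[str]) -> list[str]:
    """Return the required artifacts not yet collected, sorted alphabetically."""
    return sorted(REQUIRED_ARTIFACTS - collected)


# Example: a team that has only the inventory and model cards so far.
print(missing_artifacts({"ai_bom", "model_cards"}))
```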

Where to start

Take the survey: mend.io/ai-security-survey

This resource supports teams seeking to align existing AI practices with compliance requirements and establish a defensible roadmap. A practical starting point is documenting current AI assets and their usage contexts. Establishing this baseline enables structured risk management and compliance planning.